The Reality of the Human Hack: Why Your Tech Stack Won’t Save You

We spend millions on firewalls, EDR, identity providers, and endpoint tools, yet one of the most effective entry points into an organisation is still a tired employee on a Tuesday afternoon. Social engineering is not a technical vulnerability in the usual sense. It works because it leans on ordinary human behaviour: trust, helpfulness, urgency, embarrassment, and the desire to avoid conflict.

That is what makes it so difficult to control. The attacker does not always need to beat the system. Sometimes they only need to find the person or process allowed to bend it.

This is not about blaming the person who answered the phone, clicked the link, or approved the transfer. It is about asking why the process gave one rushed decision that much power in the first place.

The Messy Reality of the Help Desk

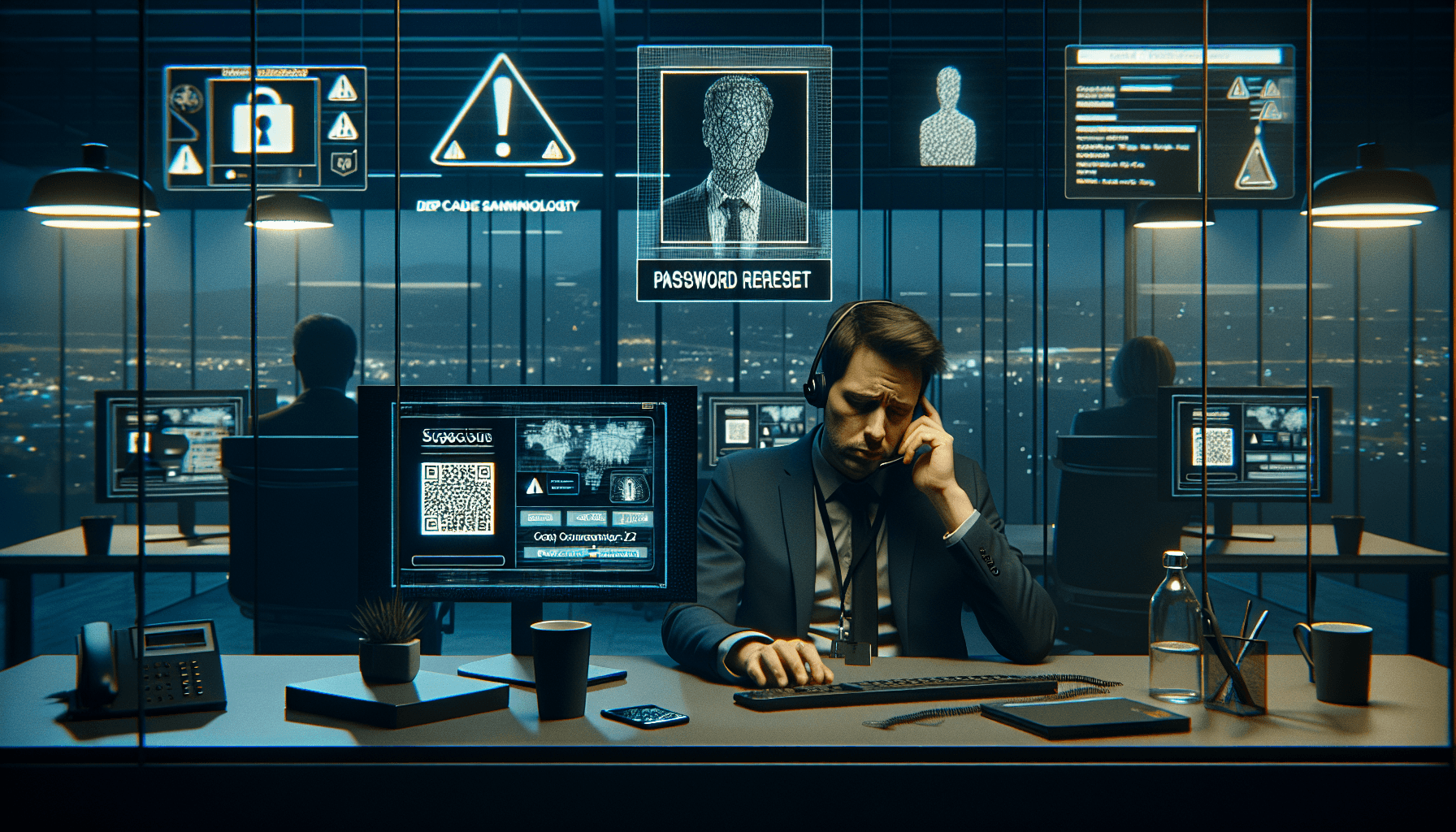

Take the MGM Resorts breach in 2023. It is not remembered for an exotic zero-day or some elegant piece of custom malware.

The part that stuck was much more ordinary: attackers reportedly used publicly available information and help desk manipulation to get access.

That should make every organisation uncomfortable, because help desks are built to be helpful.

They are often measured on speed, ticket closure, user satisfaction, and keeping the business moving. When someone calls sounding stressed, says they have lost their phone, and cannot get past the MFA prompt, the support instinct is to solve the problem.

And that is exactly where the pressure sits. The agent is not necessarily careless. They may be stuck between an impatient user, a queue of unresolved tickets, and a reset process that relies too heavily on judgement under pressure.

The attacker does not need to hack the tool if the reset pathway is loose enough to be talked through.

MGM later estimated the incident's financial impact at roughly $100 million. Caesars, targeted around the same period, reportedly paid a ransom.

The lesson is not that every help desk is weak. The lesson is that password and MFA recovery are high-risk moments, and too many organisations still treat them like routine admin.

When Seeing Is Not Believing

We used to tell people to check the sender’s email address, look for typos, and be suspicious of strange wording. That advice is not useless, but it is nowhere near enough anymore.

In early 2024, a finance worker in Hong Kong was reportedly drawn into a video call with what appeared to be the company’s CFO and other colleagues.

The faces looked right. The voices sounded right. The request appeared to come through a familiar chain of authority. The employee transferred about US$25 million (HK$200 million).

The people on the call, apart from the victim, were deepfakes.

That case matters because it attacks one of the assumptions many approval processes still rely on: “I know who I am dealing with.” In a deepfake environment, recognising the person is no longer enough. A familiar face, a familiar voice, and a confident instruction can no longer be treated as proof that the request itself is legitimate.

This is where basic awareness training starts to look very thin. A finance employee in a high-pressure environment is not protected by a few reminder slides about phishing.

They need a process that does not allow a major transfer to be authorised through a video call, a chat message, or a convincing performance of seniority.

The Physical Side of the Digital Scam

Then there is quishing, or QR-code phishing.

It is an awkward name for a simple trick: move the scam into the physical world, where many digital controls cannot see it.

A malicious QR code pasted over a legitimate one on a parking meter, EV charger, office noticeboard, or HR poster can send someone to a convincing login page.

The person scans it quickly, often while distracted, enters credentials, sees an error or “glitch,” and gets on with their day. By the time anything feels wrong, the account may already be in use elsewhere.

The reason this works is not mysterious. A QR code hides the destination. An email filter cannot inspect a sticker on a wall. A person standing in a lift or car park is not thinking like a security analyst.

They are thinking about paying, logging in, getting through the door, or reaching the next meeting.

That is the reality of modern social engineering. It does not stay neatly inside email. It moves between physical spaces, phones, identity systems, support desks, and ordinary workplace routines.

The attack follows the path people already take.

The Problem with “Security Culture”

We talk a lot about security culture. Sometimes that is useful. Too often, though, it becomes a polite way of blaming the user.

An employee clicks a link, so we send them to remedial training. Someone approves a request, so we remind everyone to be vigilant. A support agent resets access, so we add another warning to the procedure.

None of that is enough if the underlying process still allows one person, under pressure, to create a major exposure.

You cannot patch human nature. People get tired. They rush. They want to help. They defer to authority. They avoid awkward conversations.

A security model that depends on thousands of employees never making a mistake is not a model; it is a bet.

Some “best practices” also deserve more scrutiny. Rotating passwords every 90 days does not stop a convincing vishing call.

A strong password does not matter if the attacker persuades the user or the help desk to hand over the access path. The control has to match the attack, not just look good in a policy document.

Moving Past the Checklist

Real resilience is usually less glamorous than people want it to be.

It means designing the ugly, inconvenient parts of the process properly: resets, exceptions, approvals, overrides, urgent requests, and after-hours support.

Make access recovery hard to manipulate.

A help desk should not reset a password or MFA token based on a voice call alone. There should be a secondary verification process that does not depend on whether the caller sounds convincing. Some security steps should be inconvenient, and password and MFA resets are one of them.
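To make the reset rule concrete, here is a minimal Python sketch of a reset gate. It is illustrative only: the `Evidence` categories, the `ResetRequest` type, the `may_reset` function, and the thresholds (one independent check for a password reset, two for an MFA reset) are hypothetical choices, not taken from MGM's process or any help desk product. What it encodes is the idea that evidence the caller controls, such as knowing an employee ID or a manager's name, never counts on its own.

```python
from dataclasses import dataclass, field
from enum import Enum, auto


class Evidence(Enum):
    """Hypothetical kinds of verification a help desk agent might collect."""
    CALLER_KNOWS_PERSONAL_DETAILS = auto()  # weak: often findable online
    CALLBACK_TO_NUMBER_ON_FILE = auto()     # agent calls back on a number from HR records
    MANAGER_CONFIRMATION = auto()           # manager confirms through an internal channel
    IN_PERSON_ID_CHECK = auto()             # photo ID checked by someone on site


# Evidence a caller can supply just by being well prepared and sounding convincing.
CALLER_CONTROLLED = {Evidence.CALLER_KNOWS_PERSONAL_DETAILS}


@dataclass
class ResetRequest:
    user_id: str
    reset_mfa: bool  # True if the MFA factor itself is being reset or re-enrolled
    evidence: set[Evidence] = field(default_factory=set)


def may_reset(request: ResetRequest) -> bool:
    """Allow a reset only when enough independent, out-of-band checks passed.

    Caller-controlled evidence is discarded before counting. A password reset
    needs one independent check; an MFA reset needs two, because it removes
    the factor that would otherwise stop someone holding a stolen password.
    """
    independent = request.evidence - CALLER_CONTROLLED
    required = 2 if request.reset_mfa else 1
    return len(independent) >= required


if __name__ == "__main__":
    # A stressed caller who "lost their phone" and knows all the right details.
    convincing_call = ResetRequest(
        "jdoe", reset_mfa=True,
        evidence={Evidence.CALLER_KNOWS_PERSONAL_DETAILS},
    )
    print(may_reset(convincing_call))  # False

    # The same request after a callback and a manager confirmation.
    verified = ResetRequest(
        "jdoe", reset_mfa=True,
        evidence={Evidence.CALLBACK_TO_NUMBER_ON_FILE, Evidence.MANAGER_CONFIRMATION},
    )
    print(may_reset(verified))  # True
```

The specific numbers matter less than the separation: how convincing the caller sounds is simply not an input to the decision, so the agent is never asked to judge it under pressure.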

Verify the request, not just the person. For high-value transfers, sensitive data access, and major account changes, recognising the person should not be enough.

The request itself needs to be confirmed through a hardened internal process, with multi-person approval where the risk justifies it.

Email, chat, and video instructions should not be the final authority.
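A rough sketch of what "verify the request, not just the person" could look like as a rule in a payments workflow follows. The channel names, the `PAYMENT_SYSTEM` workflow, the `transfer_authorised` function, and the 50,000 threshold are all assumptions for illustration. The rule it encodes comes from the text above: approvals given over email, chat, or video never count, the requester cannot approve their own transfer, and high-value transfers need two distinct approvers.

```python
from dataclasses import dataclass
from enum import Enum


class Channel(Enum):
    EMAIL = "email"
    CHAT = "chat"
    VIDEO_CALL = "video_call"
    # Hypothetical hardened workflow where each approver signs in with their
    # own credentials and MFA and approves the specific payment record.
    PAYMENT_SYSTEM = "payment_system"


# Channels where a convincing impersonation can arrive. A request may start
# here, but an approval given here is never counted.
UNTRUSTED_CHANNELS = {Channel.EMAIL, Channel.CHAT, Channel.VIDEO_CALL}

# Hypothetical threshold; each organisation would set its own.
HIGH_VALUE_THRESHOLD = 50_000


@dataclass(frozen=True)
class Approval:
    approver_id: str
    channel: Channel


def transfer_authorised(amount: float, requester_id: str,
                        approvals: list[Approval]) -> bool:
    """Return True only if the transfer has enough independent approvals.

    An approval counts only if it was recorded in the internal system and
    did not come from the requester; each approver is counted once.
    """
    approvers = {
        a.approver_id
        for a in approvals
        if a.channel not in UNTRUSTED_CHANNELS and a.approver_id != requester_id
    }
    required = 2 if amount >= HIGH_VALUE_THRESHOLD else 1
    return len(approvers) >= required


if __name__ == "__main__":
    # "The CFO and colleagues" instructed the transfer on a video call.
    on_call = [Approval("cfo", Channel.VIDEO_CALL),
               Approval("controller", Channel.VIDEO_CALL)]
    print(transfer_authorised(25_000_000, "finance_clerk", on_call))  # False
```

Under a rule like this, the deepfake call in the Hong Kong case could still start a request, but it could not complete one: the real CFO and a second approver would each have to sign in and approve the payment themselves.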

Assume someone will be fooled.

That is not cynicism; it is design discipline. If a user is socially engineered, the damage should be contained. Least privilege needs to mean something in practice.

Segmentation needs to limit movement. Exceptions need to be visible. Alerts need to reach someone who has the time and authority to act.
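As one hypothetical illustration of "exceptions need to be visible", the sketch below logs every override of a normal check and routes it to a reviewer other than the person who made it. `Override`, `record_override`, and the on-call list are stand-ins for whatever ticketing or alerting system an organisation actually runs; only Python's standard `logging` module is real here. If no independent reviewer is available, it raises instead of letting the exception pass quietly.

```python
import logging
from dataclasses import dataclass
from datetime import datetime, timezone

log = logging.getLogger("security.overrides")


@dataclass(frozen=True)
class Override:
    actor_id: str   # who bypassed the normal check
    control: str    # which check was bypassed, e.g. "mfa_reset_verification"
    reason: str     # the justification given at the time
    at: datetime    # when it happened (timezone-aware)


def record_override(override: Override, on_call: list[str]) -> str:
    """Log an override and route it to a reviewer other than the actor.

    Raises RuntimeError if nobody independent is available, so the
    exception cannot go through with no one looking at it.
    """
    reviewers = [person for person in on_call if person != override.actor_id]
    if not reviewers:
        raise RuntimeError(
            f"no independent reviewer for override by {override.actor_id}"
        )
    reviewer = reviewers[0]
    log.warning(
        "override of %s by %s at %s (reason: %s); routed to %s",
        override.control, override.actor_id,
        override.at.isoformat(), override.reason, reviewer,
    )
    return reviewer


if __name__ == "__main__":
    urgent_reset = Override(
        "agent_17", "mfa_reset_verification",
        "caller said the CEO was waiting", datetime.now(timezone.utc),
    )
    print(record_override(urgent_reset, ["agent_17", "secops_oncall"]))  # secops_oncall
```

The routing rule is deliberately blunt. In practice the reviewer would also need the time and authority to reverse the override, which is an organisational decision rather than something code can supply.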

The weak point is often not the firewall. It is the process around the firewall: who can reset access, who can approve exceptions, and who is allowed to override the normal checks when someone sounds urgent enough.

Until organisations start treating those moments as part of the security architecture, social engineering will keep working.

Not because people are careless, but because the system still gives one rushed, pressured human too much room to make a catastrophic decision.
